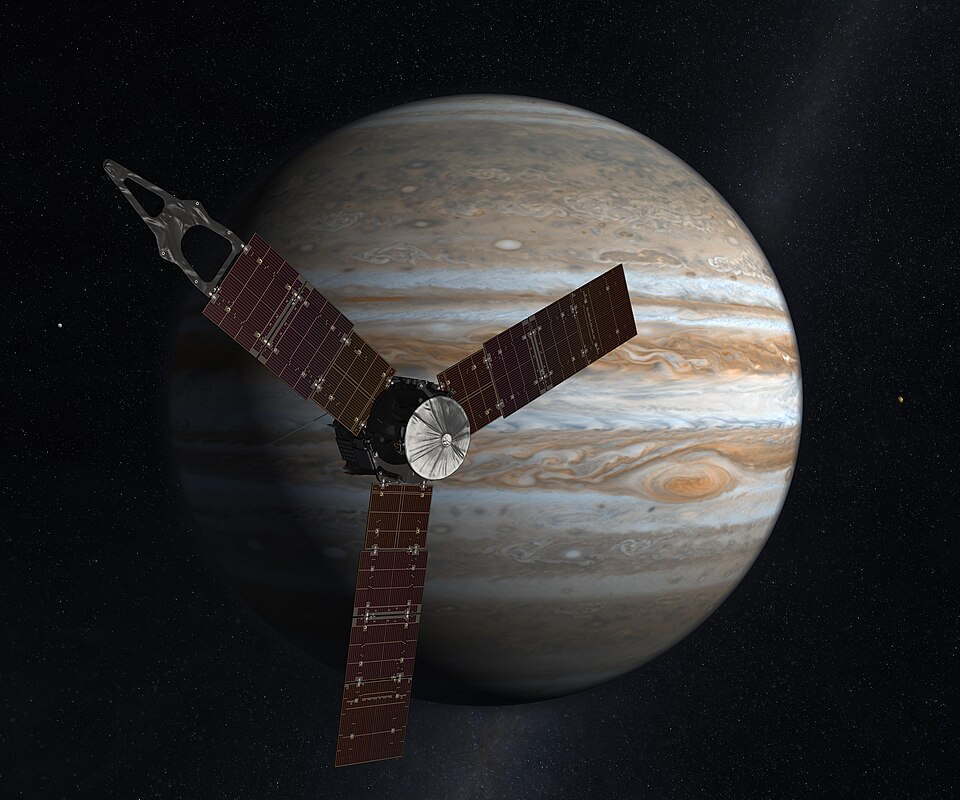

NASA’s Juno spacecraft, orbiting Jupiter since 2016, continues to deliver surprising discoveries about the largest planet in our solar system. Data from the mission, announced in early 2026, reveals that Jupiter experiences lightning storms vastly more powerful than any seen on Earth, with individual bolts carrying up to 10 trillion joules of energy. These findings add to a growing catalog of discoveries from the mission, which has fundamentally changed our understanding of gas giant planets.

The discovery of extreme lightning came from analysis of Juno’s Microwave Radiometer, which detected 613 microwave pulses from lightning over 12 flybys between 2021 and 2022. Each pulse represents a discharge hundreds of times more powerful than typical terrestrial lightning. The largest events contain energy equivalent to approximately 2,400 tons of TNT, roughly one-sixth the energy of the Hiroshima atomic bomb.
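The TNT and Hiroshima comparisons above can be checked with a few lines of arithmetic. The sketch below assumes the standard convention of 4.184 billion joules per ton of TNT and a Hiroshima yield of about 15 kilotons; published estimates of that yield vary, so the final ratio is approximate.

```python
# Energy of the largest reported bolt, as quoted in the article.
BOLT_ENERGY_J = 10e12  # 10 trillion joules

# Standard convention: one ton of TNT is defined as 4.184e9 joules.
JOULES_PER_TON_TNT = 4.184e9

# Assumption (not from the article): Hiroshima yield of roughly 15 kilotons.
HIROSHIMA_YIELD_TONS = 15_000


def joules_to_tons_tnt(energy_j: float) -> float:
    """Convert an energy in joules to its equivalent in tons of TNT."""
    return energy_j / JOULES_PER_TON_TNT


bolt_tons = joules_to_tons_tnt(BOLT_ENERGY_J)
fraction_of_hiroshima = bolt_tons / HIROSHIMA_YIELD_TONS

print(f"{bolt_tons:,.0f} tons of TNT")
print(f"{fraction_of_hiroshima:.2f} of the assumed Hiroshima yield")
```

Under these assumptions the result lands close to 2,400 tons and a little under one-sixth of the bomb's yield, consistent with the figures quoted above.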

Jupiter’s atmosphere produces these powerful storms in ways that differ from Earth’s. On our planet, lightning requires the separation of electric charges in water-based storm clouds. Jupiter’s atmosphere contains water clouds at depths where pressures exceed several bar, but the precise charge separation mechanism remains under investigation. Ammonia clouds may play a role on Jupiter similar to the one that water clouds play on Earth.

The detection of lightning at polar latitudes surprised researchers, who had expected such activity to be limited to equatorial regions. The storms occur in both polar vortices and in the belts that characterize Jupiter’s atmospheric circulation, suggesting that the underlying mechanisms operate across a wider range of conditions than previously recognized.

Other Juno discoveries from early 2026 include the most powerful volcanic eruption ever observed on Io, the innermost of Jupiter’s four Galilean moons. The event surpassed all previous records for volcanic output on that moon, which is the most volcanically active body in the solar system. The observation demonstrates that Io’s interior remains vigorously active, driven by tidal heating from its gravitational interaction with Jupiter and the other Galilean moons.

Juno’s measurement of Europa’s ice shell thickness revealed an average of approximately 29 kilometers over half the moon’s surface, providing critical data for missions planning to explore the subsurface ocean. The ice shell is the barrier between the surface and the ocean of liquid water thought to lie beneath it, so its thickness affects how future missions might reach that ocean.

A February 2026 announcement revised Jupiter’s measured dimensions. Using radio occultation data from 13 flybys, Juno revealed that Jupiter is approximately 8 kilometers narrower at the equator and 24 kilometers flatter at the poles than previous estimates from the 1970s Pioneer and Voyager missions. These refinements improve models of Jupiter’s interior structure and help in interpreting observations of gas giant exoplanets.

Despite these achievements, Juno’s future remains uncertain. NASA considered terminating the mission in its FY2026 budget, citing the approximately $260 million annual cost. The spacecraft remains healthy as of April 2026, but no decision has been announced about mission extension beyond the current phase.

Lightning on gas giants occurs in atmospheres composed primarily of hydrogen and helium, with trace amounts of water, ammonia, and other compounds. The electrical properties of these atmospheres differ from Earth’s water-based clouds, where charge separation occurs as water droplets collide and freeze.

The energy in Jupiter’s lightning reflects the planet’s immense size and the scale of atmospheric dynamics. The Great Red Spot, a storm larger than Earth, demonstrates the energy available in Jupiter’s atmosphere. Convective updrafts in the belts and zones drive the circulation that produces electrical activity.

Juno’s Microwave Radiometer detects lightning at wavelengths around 1.4 centimeters, where the instrument can peer hundreds of kilometers deep into Jupiter’s atmosphere. This penetration depth allows detection of lightning from depths where water clouds exist, at pressures exceeding several bar. Radio wavelengths also pass through the cloud layers that would obscure optical detection.
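For readers more used to thinking in frequencies, the quoted wavelength can be converted with the relation frequency = speed of light / wavelength. The sketch below is a simple unit conversion, assuming the vacuum speed of light; the function name is illustrative and not part of any Juno data tool.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by SI definition


def wavelength_to_frequency_ghz(wavelength_cm: float) -> float:
    """Convert a wavelength in centimeters to a frequency in gigahertz."""
    wavelength_m = wavelength_cm / 100
    return SPEED_OF_LIGHT_M_PER_S / wavelength_m / 1e9


# Wavelength quoted in the article for the lightning detections.
freq_ghz = wavelength_to_frequency_ghz(1.4)
print(f"{freq_ghz:.1f} GHz")
```

A wavelength of 1.4 centimeters works out to a little over 21 GHz, which sits in the microwave part of the radio spectrum.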

The detection of powerful lightning has implications for the interior energy balance of Jupiter. Lightning draws its energy from atmospheric dynamics, which are in turn driven by heat from Jupiter’s interior. The power output of lightning storms contributes to the overall energy budget that Juno has measured from orbit.

